Sounding Off

The Virginia Center for Computer Music is Making Noise

The theme of the Virginia Center for Computer Music’s (VCCM) annual Technosonics concert was deceptive. The word “Noise” blared across the program’s cover, suggesting the audience was in for a bumpy electronic ride. Yet when the first performer, Kojiro Umezaki, took the stage, he delivered a gentle and haunting improvisation on the shakuhachi, a traditional Japanese bamboo flute, accompanied by a computer program that responded to his playing with the tranquil sounds of wind and bells. Clearly, the VCCM was out to change people’s minds about what kind of noise emerges when musicians and computers collide.

“I think when people hear the terms ‘computer’ and ‘music’ conjoined,” says VCCM founder and UVA music professor Judith Shatin, “their eyes glaze over, and they think that what you’re creating is bleeps and bloops.”

Tall and elegant, Shatin is the antithesis of the scraggly-guy-hunched-over-a-keyboard-in-a-darkened-room stereotype. She and her colleagues, Matthew Burtner and Ted Coffey, want to dispel a common misperception that technology aims to replace acoustic instruments. At the VCCM, they’ve never met a sound they didn’t like. Whether it’s acoustic, electric or organic, all sound offers creative potential, they say. Their collective open ear hears a sonic continuum, where acoustic instruments and computers work in tandem to engage people with the world around them. That outlook has vaulted the VCCM to the top tier of music technology programs, with its applicant pool and cutting-edge research comparable to better-funded programs, such as those at Princeton and Columbia. With faculty that regularly win international music competitions and major commissions and courses that generate long waitlists, it’s a small operation with a big reputation.

Hum

Judith Shatin remembers well her first encounter with digital music. A graduate student in composition at Princeton, she was attending the Aspen Music Festival in the mid-1970s when she came across an early Buchla synthesizer. Shatin was intrigued, but says the difficulty of pursuing computer music in “the days of the old mainframes” was daunting.

She focused on composing acoustic music for the remainder of her doctorate and through her first eight years at UVA. But when personal computers began gaining popularity in the 1980s and the University offered equipment to qualified faculty for research projects, Shatin leapt at the chance to explore how computers might expand theoretical issues within composition.

“It was really learning a lot by jumping feet first,” she says.

At the time, the technological backbone of computer music, called Musical Instrument Digital Interface (MIDI), was in its infancy. Professors at only a few universities, such as Columbia, Princeton and Stanford, were pursuing research. Still, Shatin recalls, when she established the VCCM in 1987, “I understood somehow that this was going to be a huge development in the arena of music.”

Today, sitting amid the 10 Linux and Macintosh workstations in the VCCM’s classroom and research facility in Old Cabell Hall, Shatin smiles. “We started with a couple of Mac SEs, a Mac II and a synthesizer, and we thought we were really wonderful at that point.”

Buzz

The nascent computer music center quickly gained recognition, winning grants and University awards for academic excellence, but Shatin says the equipment became outdated “in what began to feel like a nano-hour.” She recalls offering classes in the mid-1990s at the engineering school because it had better computers. “We were always on the lookout for new opportunities,” she says. “We’ve been great at scrounging equipment.”

Meanwhile, Shatin’s personal research produced compositions combining acoustic instrumentation with computer-enhanced environmental noises, such as animal voices or the sounds of a working coal mine. Her first interactive computer music installation, “Tree Music,” commissioned for an exhibit at the UVA Art Museum, captured the sounds and rhythms of sculptor Emilie Brzezinski’s tools as she axed and chiseled the wood. One of her newest projects is “Rotunda,” a collaboration with filmmaker Robert Arnold. For this piece, Shatin is creating music from recordings capturing the resonance of the Dome Room, of people speaking about the Rotunda and of sounds made in the vicinity of the Lawn.

“I love using technology to embrace the organic,” she says. “Working with the sounds of the world can lead one to experiences one never anticipated.”

This open-ended approach to musicology caught the attention of Matthew Burtner, who was in a more traditional program at Stanford, developing his celebrated, techno-enhanced Metasaxophone. Burtner, who grew up playing sax on the back of a fishing boat in rural Alaska, was looking for a place where he could pursue his interests in ethnomusicology, acoustics and computer technology. He joined the VCCM in 2001, and among the numerous works he’s composed—often involving dance and visual art—are several that explore native Alaskans’ experience of the natural world, such as when the snows begin to melt in spring.

Together, Shatin and Burtner initiated a new Ph.D. program in composition and computing technologies and, in 2002, the first class of three carefully selected graduate students arrived, attracted by Shatin’s and Burtner’s international reputations.

Boom

Five years on, the VCCM is at the top of its game and its research projects are as varied as they are innovative. Grad student Peter Swendsen is working with interactive environments for dancers; fellow student Troy Roger has devised robots capable of performing music; and Juraj Kojs, a classically trained pianist, has created computer compositions for traditional Slovak folk instruments. “The graduate students are totally stimulating to me,” says Ted Coffey, a composer with a background in production who joined the VCCM faculty in 2005.

With only three faculty members and one technology support specialist, offering a full range of courses can be challenging, especially with today’s techno-savvy undergraduates ravenous for all things digital. “We have more students than we can handle,” says Shatin. “They have to line up.”

Last fall, Burtner’s Technosonics course, which requires students to post blogs and deliver their assignments via podcasts, had an enrollment of 120, with the same number relegated to the waitlist. “No one is teaching composition courses that large,” says Burtner. “I think it’s great that the University of Virginia is supporting that.”

Other courses have students building their own instruments and creating “sonic ecologies.” Last year, for Coffey’s course in music and technology, he had students simultaneously playing music they’d jointly composed as they walked around Grounds carrying CD players, a piece Coffey dubbed “Boom Box Amoeba.”

Every year, a Technosonics concert brings VCCM faculty and guest artists together to hear each other’s work. Each spring they also combine forces to present Digitalis, which showcases student compositions. But Shatin, Burtner and Coffey all point out that the absence of a recording studio and performance space wired for digital technology severely limits the VCCM’s potential. Their ideal vision: a digital arts facility that would enable those working in different mediums (music, art and theater) to collaborate on a whole new level.

For those who still think computer music is about “bloops and bleeps,” Shatin has a few words: “It’s not about whether it’s digital or acoustic—it’s about the imagination of the creator.”

Sax Drive

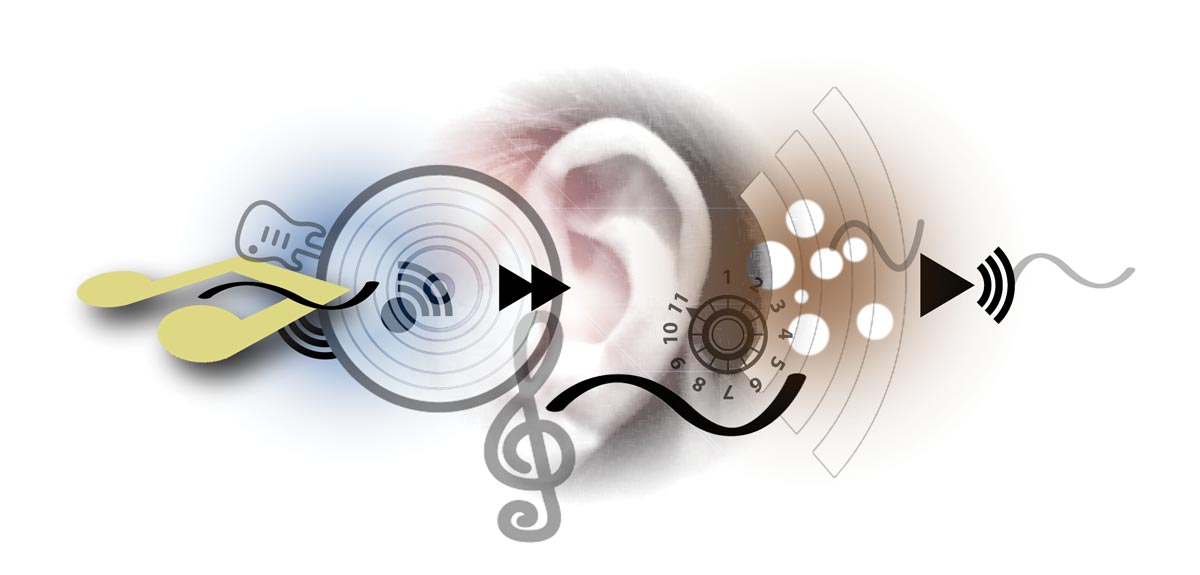

It all started with aching hands. In 1997, after repeatedly playing his compositions “Incantation S4” and “Split Voices,” saxophonist Matthew Burtner noticed his clenched hands and realized his fingers’ pressure on the keys had intensified as the music progressed, although the elongated notes’ sounds remained the same throughout. That sparked an idea: Why not capture the tactile variation by outfitting each key with a computerized sensor? Burtner also decided to add other motion sensors to the body of the sax, and in 1999, the Metasaxophone was born—an acoustic instrument with technologically enhanced performance capabilities.

Burtner says composing for the Metasaxophone requires a shift in approach. “You compose the choreography,” he explains, “because all your movements are creating sounds.” He describes how the angle of the instrument lets the computer know how to respond; for instance, positioning the sax at a 45-degree angle might alert the computer to mimic the instrument’s being held in high wind.

Since inventing the first Metasax, Burtner has built two more. “Every time I make one,” he says, “the technology improves.” The second was commissioned by the University of Arizona, which viewed it as a way to analyze students’ performance techniques. “That had never occurred to me,” Burtner admits.

Recently, the saxophone manufacturer Selmer summoned Burtner to Paris to discuss creating a reproducible version of the Metasaxophone. “So now I’m developing a method, a Metasax method, for the everyday,” he says, noting happily, “That was my vision all along—that this would be something players could use.”