Do the Robot

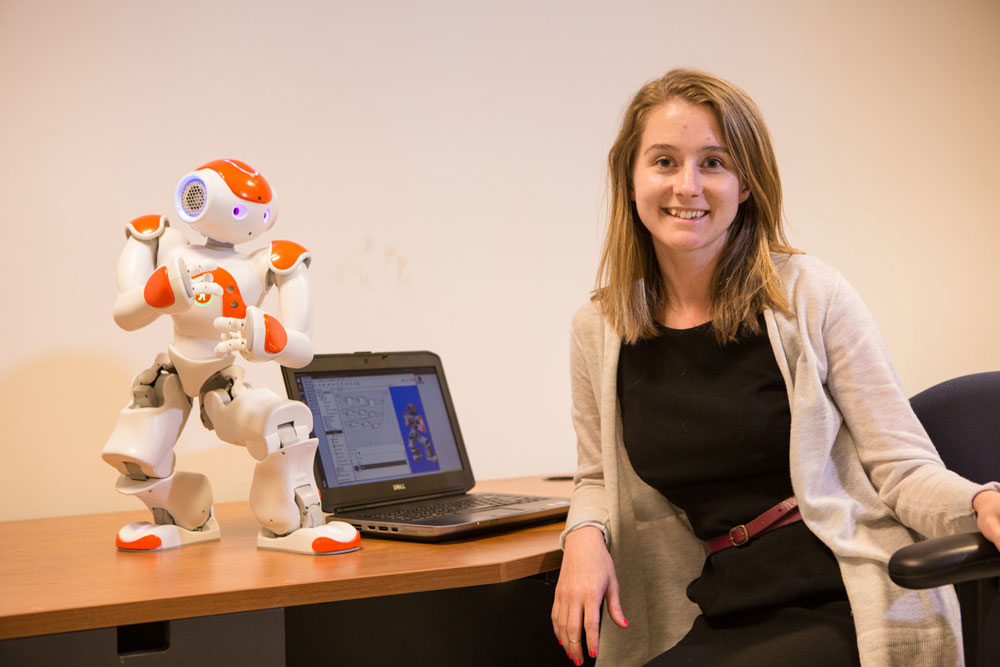

You may encounter dancing robots in the lab of engineering professor Amy LaViers, who studies human movement to improve robotic applications.

In her lab in UVA’s Olsson Hall, Amy LaViers, assistant professor in systems and information engineering, can make robots dance. But this is no parlor trick. What LaViers, who arrived at UVA in early 2014, really wants is to develop algorithms for robots that are informed by the high-level behavior of human beings, because the real-world implications are vast. That they can dance is just icing on the cake.

Dance is a discipline LaViers knows from years of lessons and performances. Growing up in Kentucky and Tennessee, she joined the Tennessee Children’s Dance Ensemble and later a modern dance company in high school. At Princeton, she minored in dance and majored in mechanical and aerospace engineering. In her junior year there she had an epiphany of sorts when she watched a video of Twyla Tharp talking about her choreography.

“She described the home position for both modern dance and ballet,” says LaViers. “In ballet, you stand with heels together and toes out—when you pick up one foot or the other, your weight is distributed such that it’s easy to step right or left. But in modern dance, your feet are under your hips and parallel to each other. In order to pick up a foot, you really need to create a weight shift to facilitate that step. Tharp described what she called these ‘inherently different stabilities’ leading to very different trajectories of movements.”

As LaViers watched, she realized that human movement was something she could analyze quantitatively with math. “I could combine my interest in engineering to bring quantitative tools to supplement this discussion that goes on in dance,” she says. Today, she studies the structure of human movement to better program robots. “Dance is curated movement,” says LaViers. “It’s movement that’s already codified and highly organized. This is a good place to start when thinking about libraries of movements for robots.”

In her Robotics, Automation, and Dance (RAD) Lab at UVA, LaViers conducts research using two distinctive robots. One is a nearly two-foot-tall Aldebaran NAO robot and the other is a Rethink Robotics Baxter robot that, with its wheeled platform, tops 6 feet. LaViers and her students also work with a motion-capture system to study and sequence human movements and modulate them for the robots.

“Many people have tried to make robotic movements look more human, but it’s more than that,” says LaViers. “It’s understanding the structure in human movement and the way we organize it to make robots that exhibit more complex, higher-level behaviors.”

She offers the example of an automobile plant, where robots on the assembly line, using low-level controllers, make the same motions to do the same tasks over and over again. “This motion is precise, often forceful, and very repeatable,” she says. “But what if we want to vary that motion or we want to sequence movements for different, more complicated tasks? That’s where we move into this high-level area. Many people are working to try to understand how to do this, but far fewer are studying the way people move for inspiration.”

LaViers’ lab buzzes with both artificial and human intelligence. The NAO robot marches around the room, its eyes lighting up. A first-year student, Brooke Dickie (Engr class of ’18), tests out the lab’s motion-capture system, wearing a black body suit covered in reflectors. Cameras around the room pick up her movements and transfer her form to a screen, where the sequence of her movements can be analyzed. Dickie is an engineering student but, like LaViers, has a background in dance. “I find that people who study science but have an interest in the arts are everywhere,” LaViers says. “I’m really interested in building a community of people who look at the world in this interdisciplinary way.”